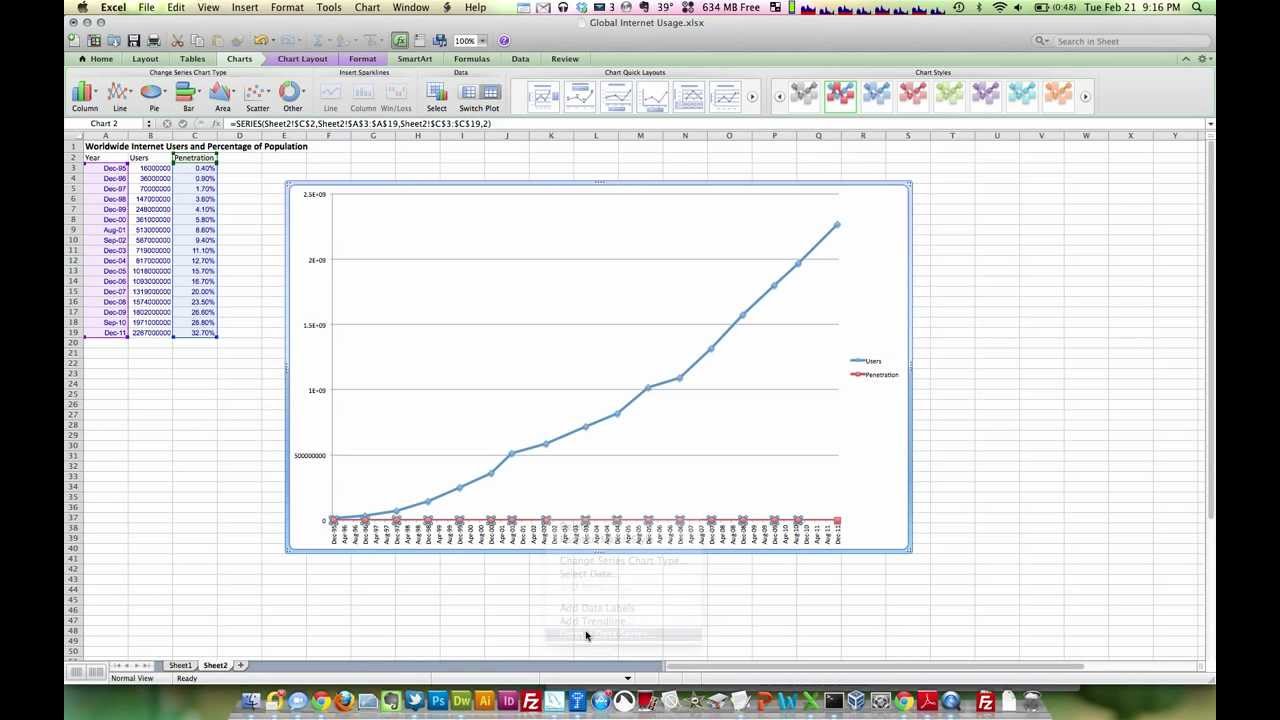

The missingno toolbox contains a number of different visualisations, but for this article we will focus on the matrix plot and bar plot.

The matrix plot can be called by: `msno.matrix(data)`

Missing value counts from `msno.bar(data)`.

With a quick glance at the matrix plot and bar chart, you can get a sense of what data is missing from your dataset, especially if it has a large number of columns.

Missing Data Visualisation Using Matplotlib

In my previous article, Visualising Well Data Coverage Using Matplotlib, I covered a method of viewing the data coverage on a well by well basis. This allows you to see where the gaps are in your key curves. If we wanted to generate a plot for all of the curves per well, we could save each one out as a file or present the data in a single column.
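As a rough illustration of a per-well coverage view, the sketch below draws one presence/absence panel per well using plain matplotlib. It is not the exact method from the earlier article: the toy dataframe, the well names (`A-1`, `B-2`) and the choice of `imshow` are assumptions made for this example only.

```python
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Hypothetical toy data standing in for the well log subset;
# well names and curve values are made up for illustration.
toy = pd.DataFrame({
    "WELL": ["A-1"] * 4 + ["B-2"] * 4,
    "GR": [55.0, np.nan, 60.2, 61.0, 70.1, 72.3, np.nan, np.nan],
    "RHOB": [2.45, 2.47, np.nan, 2.50, np.nan, np.nan, np.nan, np.nan],
})

curves = ["GR", "RHOB"]
wells = toy["WELL"].unique()

# One panel per well, placed side by side
fig, axes = plt.subplots(1, len(wells), figsize=(3 * len(wells), 4),
                         sharey=True, squeeze=False)

for ax, well in zip(axes[0], wells):
    well_data = toy[toy["WELL"] == well]
    # 1 where a sample exists, 0 where it is missing
    presence = well_data[curves].notna().to_numpy().astype(int)
    # Dark cells mark present samples, white cells mark gaps
    ax.imshow(presence, aspect="auto", cmap="Greys",
              vmin=0, vmax=1, interpolation="none")
    ax.set_title(well)
    ax.set_xticks(range(len(curves)))
    ax.set_xticklabels(curves)

axes[0][0].set_ylabel("Sample index")
fig.tight_layout()
```

Calling `plt.show()` or `fig.savefig(...)` afterwards displays or stores the figure. With real data, the sample index on the vertical axis would normally be replaced by depth.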

#THE TWO DATA CURVES ON THE FIGURE ILLUSTRATE THAT INSTALL#

To load the subset of data in we can call upon `pd.read_csv`.

```python
data = pd.read_csv('Data/xeek_train_subset.csv')
```

Once the data has been loaded, we can confirm the number and names of the wells using the following commands:

```python
# Counts the number of unique values in the WELL column
data['WELL'].nunique()

# Gets the unique names from the WELL column
data['WELL'].unique()
```

The second command returns an array object with the well names.

Missing data within well logging can arise for a number of reasons including:

- Tools not run due to budgetary constraints
- Issues arising from the borehole environment

There are a number of ways to identify where we have data and where we don’t. We will look at 2 ways to visualise missing data.

The first method we will look at is using the missingno library, created by Aleksey Bilogur, which provides a nice little toolbox for visualising and understanding data completeness. More details on the library can be found in its documentation. If you don’t have this library, you can quickly install it by running `pip install missingno` in your terminal.
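The missing-value counts can also be checked numerically with pandas alone, which is a useful sanity check alongside any plots. The following is a minimal sketch on a made-up dataframe: the well names and curve values are hypothetical, and `toy`, `missing_counts` and `coverage_by_well` are names introduced here for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data standing in for the well log subset;
# well names and curve values are made up for illustration.
toy = pd.DataFrame({
    "WELL": ["A-1"] * 4 + ["B-2"] * 4,
    "DEPTH_MD": [1000.0, 1000.5, 1001.0, 1001.5] * 2,
    "GR": [55.0, np.nan, 60.2, 61.0, 70.1, 72.3, np.nan, np.nan],
    "RHOB": [2.45, 2.47, np.nan, 2.50, np.nan, np.nan, np.nan, np.nan],
})

# Number of missing samples per column across the whole dataset
missing_counts = toy.isna().sum()

# Percentage of non-null samples for each curve, split by well
curves = ["GR", "RHOB"]
coverage_by_well = toy[curves].notna().groupby(toy["WELL"]).mean() * 100

print(missing_counts)
print(coverage_by_well)
```

On the real dataset the same two expressions could be applied to `data` directly, assuming it has a `WELL` column as used above.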

The first step for any project is loading the required libraries and data. For this walkthrough, we are going to call upon a number of plotting libraries: seaborn, matplotlib and missingno, as well as math, pandas, and numpy.

```python
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns
import math
import missingno as msno
import numpy as np
```

The dataset we are using forms part of a Machine Learning competition run by Xeek and FORCE. The objective of the competition was to predict lithology (lithofacies) from the log measurements in a dataset consisting of 98 training wells, each with varying degrees of log completeness. In order to keep the plots and data manageable in this article I have used a subset of 12 wells from the training data. The data has already been collated into a single csv file with no need to worry about curve mnemonics.

#THE TWO DATA CURVES ON THE FIGURE ILLUSTRATE THAT SERIES#

Once data has been collated and sorted through, the next step in the Data Science process is to carry out Exploratory Data Analysis (EDA). This step allows us to identify patterns within the data, understand relationships between the features (well logs) and identify possible outliers that may exist within the dataset. In this stage, we gain an understanding of the data and check whether further processing or cleaning is necessary.

As petrophysicists/geoscientists we commonly use log plots, histograms and crossplots (scatter plots) to analyse and explore well log data. Python provides a great toolset for visualising the data from different perspectives in a quick and easy way.

In this article we will use a subset of the dataset that was released by Xeek and FORCE as part of a competition to predict facies from well logs. We will be visualising the data using a mixture of the matplotlib, seaborn and missingno data visualisation libraries.

The visualisations we will cover will allow us to:

- Identify where we have or don’t have data
- Visualise data affected by bad hole conditions

The notebook for this article can be found on my Python and Petrophysics Github series, which can be accessed at the link below: